MAIXAI4TRUST: Multimodal, Affective and Interactive eXplainable AI for Trust

Recent socioeconomic reports point to a growing perception of AI's potential impact on everyday life and to widespread unease about AI services. These perceived risks can lead people to distrust AI. Explainable AI (XAI) contributes to the development of Responsible and Trustworthy AI: explainability is a prerequisite for trustworthy intelligent systems, and XAI systems are expected to interact naturally with humans and thus provide comprehensible explanations. Nevertheless, the current state of the art lacks methods for interactively tailoring human-centered explanations to the (evolving) needs of users and for measuring how effectively those explanations enhance user understanding. By combining concepts and tools from the human-computer interaction (HCI) and AI research fields, this project will provide the scientific community with an XAI toolset for creating trustworthy explanations, supported by three main pillars: multimodal, affective, and interactive XAI methods. These pillars lead to four aligned objectives: (O1) to design, develop, and validate techniques and algorithms for multimodal data processing (involving textual, visual, and time series data, as well as combinations of multiple data sources); (O2) to design, develop, and validate techniques and algorithms to enable emotion-aware interactions; (O3) to design, develop, and validate an LLM-enhanced, dialogue-based infrastructure that integrates multimodal and affective conversational facilities for trustworthy explanatory dialogues; and (O4) to empirically evaluate the effectiveness and usability of the toolset in assisting researchers to build prototypes for two socially relevant interactive contexts related to healthy aging (i.e., cognitive stimulation in the elderly and education for better nutrition).

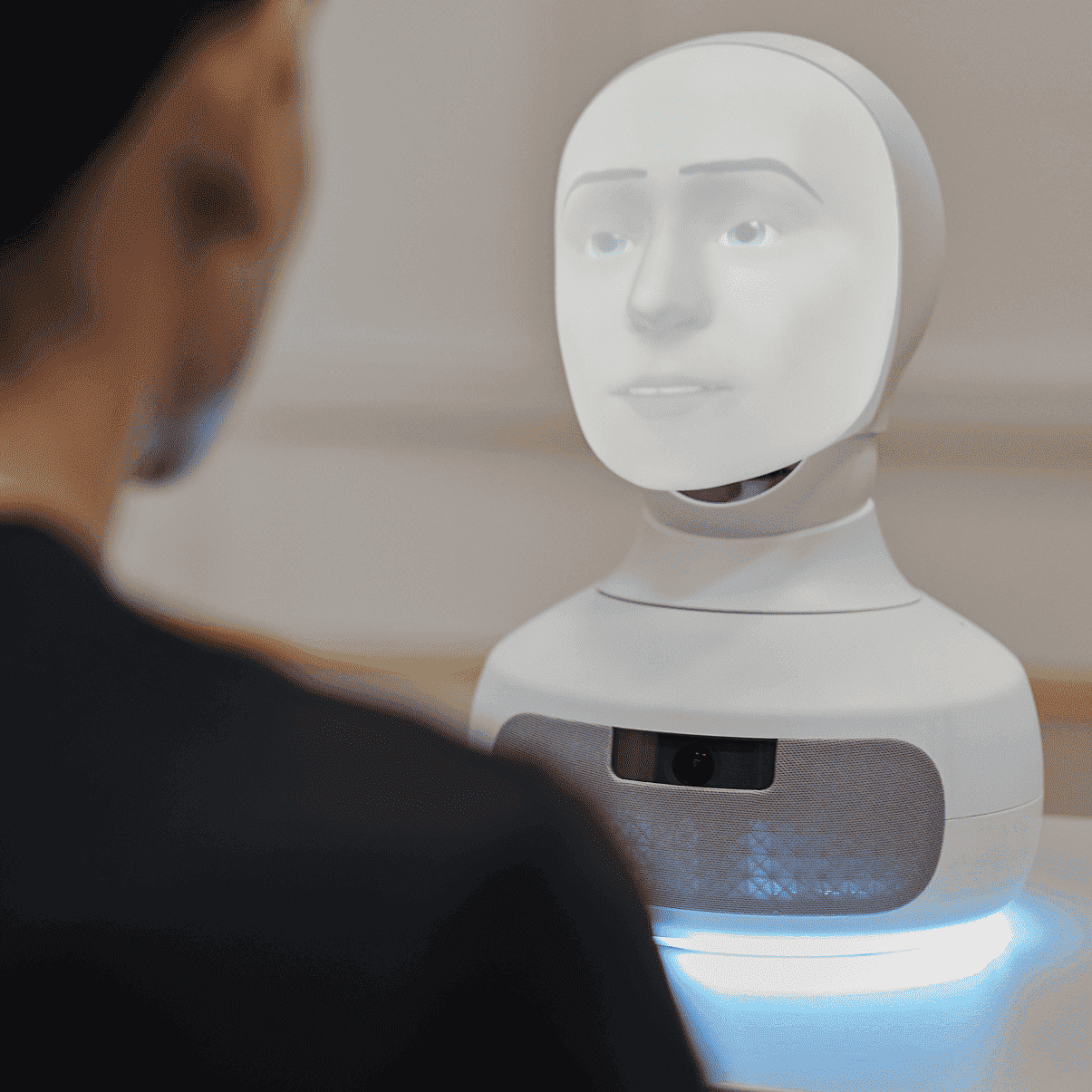

In a nutshell, the MAIXAI4TRUST project will, firstly, contribute to the research field a toolset of algorithms and techniques that facilitates generating and validating more trustworthy AI socio-technical systems enhanced by multimodal, affective, and interactive XAI methods. Secondly, building on the toolset, the project will investigate how to endow embodied AI conversational agents with the capacity to handle multimodal, affective, and interactive user context, and thus to deliver more reliable explanatory dialogues based on LLMs and other generative AI models in combination with XAI techniques. The conversational agents will be implemented with two different embodiments (i.e., a chat-based graphical user interface and a social robot) to investigate how the embodiment affects trustworthiness and design issues in human-AI interactions and XAI techniques. Thirdly, the project will validate technical prototypes in two socially relevant interactive contexts, evaluating to what extent they lead to more trustworthy, explanatory, and successful human-AI interactions.



PID2024-157680NB-I00 - José María Alonso Moral, Alejandro Catalá Bolós - Paulo Félix Lamas, Nelly Condori Fernández, Jesús María Rodríguez Presedo
